It’s a reasonable question. But it often assumes that “AI” is a single, coherent capability that should be applied broadly across a product. In practice, that’s not how these systems behave.

A lot of what is currently being built into SaaS products falls into two very different categories. Both sit under the same umbrella. Both are forms of AI. But they solve different problems, and using them interchangeably creates issues.

On one side are large language models, such as those developed by OpenAI and Anthropic. These are very effective at working with language. They can draft responses, summarise content, and extract structure from free text. They are flexible, but they are also probabilistic and relatively expensive to run.

On the other side are statistical and machine learning models. These are also AI. They are used to estimate risk, adjust for differences between populations, and detect meaningful change in data. They are far less visible, but once fitted they are typically deterministic, easier to explain, and cheap to run at scale.

In sectors like social care, that distinction matters. Most of the core questions are not open-ended. They require consistent, explainable answers that can be understood and justified. That places a premium on reliability and transparency over flexibility.

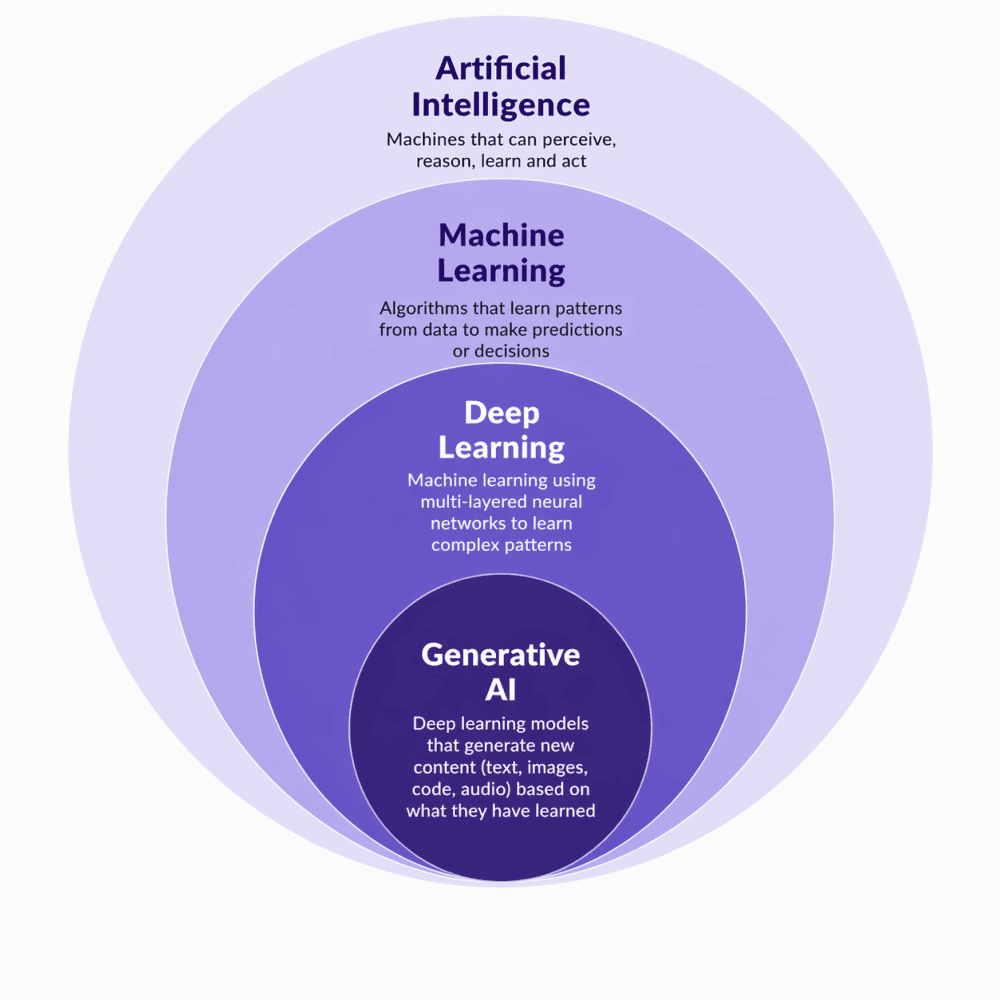

AI includes a range of approaches. Generative AI is one subset, not a universal solution, and using the right method for the problem matters.

Where this breaks in practice

At the moment, many products default to one type of AI even when the problem is better suited to another. You end up with systems that can “interrogate your data” and “generate insights”, but only by repeatedly sending structured information through expensive, probabilistic models.

And in some cases, it’s even more direct.

As Loucas Gatzoulis, our Group CTO, put it:

“I think it’s become a bit of a default pattern. Take a database, point a language model at it, and call it AI-enabled. The problem is that it says more about the product than the technology. If you don’t understand the questions your users actually need answered, the fallback is to let the model figure it out. That might look impressive, but it’s inherently inefficient. You’re using a very expensive tool to compensate for a lack of structure. Over time, that becomes difficult to sustain, particularly in a sector like aged care where cost discipline matters.”

Part of what sits underneath this is more fundamental. In many systems, the underlying data is not structured in a way that supports clear, repeatable analysis. The questions that matter are not well defined. In that context, pointing a general-purpose model at the data becomes a workaround. It fills the gap, but at a cost.

That cost is not just financial. It also shifts the burden away from understanding the domain and the data. Using statistical and machine learning models well requires a clear view of what you are trying to measure, how variables relate to one another, and whether the outputs make sense in practice. That level of discipline is harder, and it is not always visible in early AI implementations.

Reliability and accountability

There is also a reliability issue. Large language models are not deterministic. The same input can produce different outputs, and the pathway from input to answer is not always transparent.

That makes it difficult to reproduce results or explain how a conclusion was reached, which matters in environments like health and social care where decisions need to be understood, justified, and, at times, challenged.

In aged care, most of the core questions are not open-ended.

Are outcomes worse than expected given the resident population? Where is risk increasing? Which residents are most likely to deteriorate? Are we meeting regulatory expectations?

These are structured problems. They benefit from models that are designed to answer them consistently.

A more deliberate approach

That leads to a more targeted approach.

Use generative models where language is the constraint: drafting responses, summarising notes, or extracting information from unstructured inputs. These are bounded use cases where the value is clear.

That also extends to more conversational interfaces. For example, supporting staff to complete forms through guided, interview-style interactions rather than structured fields, or enabling residents and clients to respond to surveys in their preferred language. In home care, language barriers are a practical limitation. Earlier quality indicator pilots have been restricted to English-speaking participants, which is not reflective of the population. These are areas where language models can play a useful role, particularly in multilingual contexts, by reducing friction in how information is captured rather than attempting to interpret it after the fact.

Use statistical and machine learning models where understanding the result matters as much as the result itself. Estimating risk, adjusting for case mix, and identifying meaningful variation all fall into this category. In these cases, transparency is not optional. You need to be able to see how a result is produced, test whether it makes sense, and assess whether it aligns with clinical and operational expectations.

If a model suggests that one group is at lower risk than another, that should be something you can interrogate. Not just accept.
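To make "adjusting for case mix" concrete, here is a minimal sketch of one common approach, an observed-to-expected ratio built from stratum-level reference rates. It is an illustration under stated assumptions, not a description of any particular product's method: the strata, the resident counts, and the reference rates are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stratum:
    """One case-mix group within a home (all values hypothetical)."""
    name: str
    residents: int
    reference_rate: float  # assumed benchmark events per resident per period


def stratum_contributions(strata: list[Stratum]) -> dict[str, float]:
    """Expected events per stratum, so each part of the total can be inspected."""
    return {s.name: s.residents * s.reference_rate for s in strata}


def expected_events(strata: list[Stratum]) -> float:
    """Events we would expect if the home matched the reference rates."""
    return sum(stratum_contributions(strata).values())


def observed_to_expected(observed: int, strata: list[Stratum]) -> float:
    """Ratio above 1 means more events than the case mix alone would predict."""
    expected = expected_events(strata)
    if expected <= 0:
        raise ValueError("expected events must be positive")
    return observed / expected


# Hypothetical home: 80 residents across three dependency groups.
home = [
    Stratum("low dependency", 40, 0.05),
    Stratum("medium dependency", 30, 0.10),
    Stratum("high dependency", 10, 0.30),
]

if __name__ == "__main__":
    print(stratum_contributions(home))
    print(observed_to_expected(10, home))
```

With these made-up figures the home would be expected to record about 8 events, so 10 observed events gives a ratio of roughly 1.25. Every term in that result can be traced back to a stratum and a rate, which is the kind of interrogation described above.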

Avoid applying large models to problems that are already structured. In those cases, they tend to add cost without improving the quality of the answer.

The economics still matter

There is also a practical consideration around how these systems are consumed. Traditional SaaS pricing is generally fixed or per bed. The cost of large model usage, by contrast, scales with how often the models are called. If a product encourages open-ended interaction, it also creates unbounded cost. More queries mean more model calls, and more model calls mean higher cost.
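The mismatch between fixed revenue and usage-based cost can be shown with simple arithmetic. The sketch below is illustrative only: the per-bed price, the number of model calls per query, and the cost per call are hypothetical placeholders, not real pricing from any vendor.

```python
def monthly_revenue(beds: int, price_per_bed: float) -> float:
    """Fixed per-bed revenue: it does not change with how much the product is used."""
    return beds * price_per_bed


def monthly_model_cost(queries: int, calls_per_query: int, cost_per_call: float) -> float:
    """Usage-based cost: it grows linearly with the number of queries."""
    return queries * calls_per_query * cost_per_call


def break_even_queries(
    beds: int, price_per_bed: float, calls_per_query: int, cost_per_call: float
) -> float:
    """Monthly query volume at which model cost equals all per-bed revenue."""
    cost_per_query = calls_per_query * cost_per_call
    if cost_per_query <= 0:
        raise ValueError("cost per query must be positive")
    return monthly_revenue(beds, price_per_bed) / cost_per_query


if __name__ == "__main__":
    # Hypothetical provider: 100 beds at 10.00 per bed per month,
    # 3 model calls per user query at 0.02 per call.
    revenue = monthly_revenue(100, 10.0)
    for queries in (1_000, 10_000, 50_000):
        cost = monthly_model_cost(queries, 3, 0.02)
        print(f"{queries} queries: cost {cost:.2f} against revenue {revenue:.2f}")
    print(f"break-even at about {break_even_queries(100, 10.0, 3, 0.02):.0f} queries")
```

Under these assumed numbers, revenue stays flat while cost climbs with every query, and past the break-even volume the model spend alone exceeds what the provider pays. The exact figures matter less than the shape: one line is fixed, the other is not.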

Current pricing for these models is not necessarily reflective of a long-term steady state. It is shaped by competition and investment, and may not hold. Where products rely heavily on continuous model use, that creates exposure.

What sits underneath this

A more durable approach is to be deliberate about where these capabilities are used. Define the questions that matter. Structure the data so those questions can be answered reliably. Build the underlying analytics to support that. Use AI selectively where it provides clear leverage.

For us, that’s not just a technical decision. A significant part of the work sits earlier. Getting the data model right. Ensuring consistency. Designing systems around the questions that actually need to be answered. Those foundations are what make more advanced use of AI viable, and sustainable, over time.

Structure enables insight. When data and questions are clear, the right models can deliver reliable, explainable answers. Without it, we end up pointing expensive AI at a mess.

Health and social care is already operating within tight financial constraints. Introducing models that create unpredictable or escalating costs doesn’t just affect vendors; it flows directly through to providers.

There is a lot to be gained from these technologies. But using them without clear boundaries, or without aligning them to specific use cases, is unlikely to be sustainable.

That is a narrower definition of AI than what is often presented.

But it is more aligned with how these systems behave in practice, and with what customers ultimately need.